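The pandemic accelerated the collapse of long-term rental apartment operators, and 某壳公寓 (the name is partly masked in the original) kept turning up in negative news. So I scraped the complaints about 某壳公寓 from 黑猫投诉, Sina's consumer complaint platform, and analyzed them. The result is this end-to-end data analysis project, from data collection through analysis.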

    import time

    import pandas as pd
    import requests
    from fake_useragent import UserAgent

    # The requests below use verify=False, so silence the resulting TLS warnings.
    requests.packages.urllib3.disable_warnings()

    def request_data_uid(req_s, couid, page, total_page):
        """Fetch one page of complaints received by the company with ID `couid`."""
        params = {
            'couid': couid,
            'type': '1',
            # Fixed page size. The original passed page * 10, which changes the
            # page size on every request and shifts the page boundaries.
            'page_size': 10,
            'page': page,
        }
        print(f"Fetching page {page} of {total_page}, {total_page - page} remaining")
        url = 'https://tousu.sina.com.cn/api/company/received_complaints'

        header = {'user-agent': UserAgent().random}
        res = req_s.get(url, headers=header, params=params, verify=False)
        info_list = res.json()['result']['data']['complaints']
        result = []
        for info in info_list:
            _data = info['main']

            # Convert the Unix timestamp to a local YYYY-MM-DD date string.
            timestamp = float(_data['timestamp'])
            date = time.strftime("%Y-%m-%d", time.localtime(timestamp))

            data = [date, _data['sn'], _data['title'], _data['appeal'], _data['summary']]
            result.append(data)

        # Columns: complaint date, complaint number, issue, demand, details.
        pd_result = pd.DataFrame(result, columns=["投诉日期", "投诉编号", "投诉问题", "投诉诉求", "详细说明"])
        return pd_result

    def request_data_keywords(req_s, keyword, page, total_page):
        """Fetch one page of complaints returned by a keyword search."""
        params = {
            'keywords': keyword,
            'type': '1',
            # Fixed page size. The original passed page * 10, which changes the
            # page size on every request and shifts the page boundaries.
            'page_size': 10,
            'page': page,
        }

        print(f"Fetching page {page} of {total_page}, {total_page - page} remaining")
        url = 'https://tousu.sina.com.cn/api/index/s?'

        header = {'user-agent': UserAgent().random}
        res = req_s.get(url, headers=header, params=params, verify=False)
        info_list = res.json()['result']['data']['lists']
        result = []
        for info in info_list:
            _data = info['main']

            # Convert the Unix timestamp to a local YYYY-MM-DD date string.
            timestamp = float(_data['timestamp'])
            date = time.strftime("%Y-%m-%d", time.localtime(timestamp))

            data = [date, _data['sn'], _data['title'], _data['appeal'], _data['summary']]
            result.append(data)

        # Columns: complaint date, complaint number, issue, demand, details.
        pd_result = pd.DataFrame(result, columns=["投诉日期", "投诉编号", "投诉问题", "投诉诉求", "详细说明"])
        return pd_result
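The two request functions differ only in the endpoint, the query parameter, and the key that holds the result list; the parsing loop is identical. Here is a minimal sketch of factoring that loop out, assuming the response shape the code above already relies on. `parse_complaints`, `COLUMNS`, and the fixed UTC+8 offset are my own choices for illustration and not part of the original, which uses the machine's local time zone.

```python
from datetime import datetime, timedelta, timezone

# Assumption: render dates in China Standard Time (UTC+8) so the result
# does not depend on the time zone of the machine running the crawl.
CST = timezone(timedelta(hours=8))

COLUMNS = ["投诉日期", "投诉编号", "投诉问题", "投诉诉求", "详细说明"]


def parse_complaints(payload, list_key):
    """Turn one decoded JSON response into a list of rows matching COLUMNS."""
    rows = []
    for info in payload['result']['data'][list_key]:
        main = info['main']
        date = datetime.fromtimestamp(float(main['timestamp']), tz=CST).strftime("%Y-%m-%d")
        rows.append([date, main['sn'], main['title'], main['appeal'], main['summary']])
    return rows


# A hand-made payload in the same shape, for illustration only.
sample = {'result': {'data': {'complaints': [
    {'main': {'timestamp': '1604620800', 'sn': 'SN-0001', 'title': 'title text',
              'appeal': 'appeal text', 'summary': 'summary text'}},
]}}}
rows = parse_complaints(sample, 'complaints')
print(rows)
```

Passing the rows to `pd.DataFrame(rows, columns=COLUMNS)` would then give a frame with the same columns the request functions return.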

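    req_s = requests.Session()

    # Crawl the complaints filed against 某壳公寓, looked up by company ID.
    total_page = 2507
    frames = []
    for page in range(1, total_page + 1):
        frames.append(request_data_uid(req_s, '5350527288', page, total_page))
    # DataFrame.append was removed in pandas 2.0, so collect the pages and concatenate once.
    result = pd.concat(frames, ignore_index=True)
    result['投诉对象'] = "某壳公寓"  # complaint target
    result.to_csv("某壳公寓投诉数据.csv", index=False)

    # Crawl the complaints that match the keyword 某梧桐.
    total_page = 56
    frames = []
    for page in range(1, total_page + 1):
        frames.append(request_data_keywords(req_s, '某梧桐', page, total_page))
    result = pd.concat(frames, ignore_index=True)
    result['投诉对象'] = "某梧桐"  # complaint target
    result.to_csv("某梧桐投诉数据.csv", index=False)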
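The crawl loops above issue thousands of requests back to back and stop at the first page that raises an error. A hedged sketch of a more forgiving loop follows. `crawl_pages`, its `delay` pause, and the `failed` list are hypothetical additions, and `fetch_page` stands for any callable that returns the rows of one page, for example a thin wrapper around `request_data_uid`.

```python
import time


def crawl_pages(fetch_page, total_page, delay=0.0):
    """Call fetch_page(page) for pages 1..total_page; return (rows, failed_pages)."""
    rows, failed = [], []
    for page in range(1, total_page + 1):
        try:
            rows.extend(fetch_page(page))
        except Exception as exc:  # network error, unexpected JSON shape, ...
            print(f"page {page} failed: {exc!r}")
            failed.append(page)
        time.sleep(delay)  # pause between requests to go easy on the server
    return rows, failed


# Stand-in fetcher for illustration: page 2 fails, the others return one row.
def fake_fetch(page):
    if page == 2:
        raise ValueError("simulated bad response")
    return [[f"row-{page}"]]


rows, failed = crawl_pages(fake_fetch, total_page=3)
print(rows, failed)
```

Pages listed in `failed` can be retried afterwards instead of restarting the whole crawl.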

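    import os
    import re

    import pandas as pd

    # The scraper saved its CSV files to the working directory; this step reads
    # them from data/, so they are assumed to have been moved there.
    data_path = os.path.join('data', '某梧桐投诉数据.csv')
    data = pd.read_csv(data_path)
    # Keep only Chinese characters and digits in the issue title.
    pattern = r'[^\u4e00-\u9fa5\d]'
    data['投诉问题'] = data['投诉问题'].apply(lambda x: re.sub(pattern, '', x))

    # utf_8_sig writes a BOM so that Excel detects the encoding.
    data.to_csv(data_path, index=False, encoding="utf_8_sig")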
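To make the effect of the cleaning pattern concrete: `[^\u4e00-\u9fa5\d]` matches every character that is neither in the CJK range U+4E00 to U+9FA5 nor a digit, so punctuation, spaces, and Latin letters are all removed. The sample strings below are made up for illustration.

```python
import re

pattern = r'[^\u4e00-\u9fa5\d]'

# Invented titles: full-width punctuation, mixed Latin/Chinese, Latin only.
samples = ["某壳公寓,不退押金!!", "APP 提现 30 天未到账", "refund???"]
cleaned = [re.sub(pattern, '', s) for s in samples]
print(cleaned)
```

A title written entirely in Latin letters and punctuation becomes an empty string, which is worth keeping in mind when reading the word clouds.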

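    # Merge every CSV under data/ into one file.
    frames = []
    for wj in os.listdir('data'):
        # Skip the merged output so that a rerun does not read it back in.
        if wj == '合并后某壳投诉数据.csv':
            continue
        data_path = os.path.join('data', wj)
        frames.append(pd.read_csv(data_path))
    # DataFrame.append was removed in pandas 2.0, so concatenate once instead.
    result = pd.concat(frames, ignore_index=True)
    result.to_csv("data/合并后某壳投诉数据.csv", index=False, encoding="utf_8_sig")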
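One thing the merge does not address: the two crawls (by company ID and by keyword) could return the same complaint twice. The article does not say whether they actually overlap, so the following is only a sketch of how duplicates could be dropped, assuming the complaint number column 投诉编号 identifies a complaint uniquely. The sample frames are invented.

```python
import pandas as pd

# Invented rows: complaint A2 appears in both crawls.
by_uid = pd.DataFrame({"投诉编号": ["A1", "A2"], "投诉对象": ["某壳公寓", "某壳公寓"]})
by_keyword = pd.DataFrame({"投诉编号": ["A2", "A3"], "投诉对象": ["某梧桐", "某梧桐"]})

merged = pd.concat([by_uid, by_keyword], ignore_index=True)
# Keep the first occurrence of each complaint number.
deduped = merged.drop_duplicates(subset="投诉编号", keep="first").reset_index(drop=True)
print(deduped)
```

Comparing `len(merged)` with `len(deduped)` on the real data would show whether the overlap matters at all.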

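    data = pd.read_csv("data/合并后某壳投诉数据.csv")

    # Dates are ISO-formatted strings, so string comparison orders them correctly.
    data = data[data.投诉日期 <= '2020-11-09']
    print(f"As of 2020-11-09, 黑猫投诉 had received {len(data)} complaints related to 某壳公寓")

    # Daily complaint counts (投诉数量).
    _data = data.groupby('投诉日期').count().reset_index()[['投诉日期', '投诉编号']]
    _data.rename(columns={"投诉编号": "投诉数量"}, inplace=True)

    # Everything up to 2020-01-30 is collapsed into a single bar.
    num1 = _data[_data.投诉日期 <= '2020-01-30'].投诉数量.sum()
    data0 = pd.DataFrame([['2020-01-30之前', num1]], columns=['投诉日期', '投诉数量'])
    # Daily bars through 2020-02-21. The original started at 2020-02-01, which
    # left 2020-01-31 out of every bucket.
    data1 = _data[(_data.投诉日期 > '2020-01-30') & (_data.投诉日期 <= '2020-02-21')]

    # The quiet period up to 2020-11-05 is collapsed into a single bar. The lower
    # bound is exclusive so that 2020-02-21 is not counted twice.
    num2 = _data[(_data.投诉日期 > '2020-02-21') & (_data.投诉日期 <= '2020-11-05')].投诉数量.sum()
    data3 = pd.DataFrame([['2020-02-21 ~ 2020-11-05', num2]], columns=['投诉日期', '投诉数量'])

    # 2020-11-06 is reported separately and left out of the chart.
    print(f"Complaints on 2020-11-06: {_data[_data.投诉日期 == '2020-11-06'].iloc[0, 1]}")

    # Daily bars for 2020-11-07 through 2020-11-09.
    data2 = _data[(_data.投诉日期 > '2020-11-06') & (_data.投诉日期 <= '2020-11-09')]

    new_data = pd.concat([data0, data1, data3, data2])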

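    # Plot settings
    import matplotlib.pyplot as plt
    %matplotlib inline
    # (%matplotlib inline is a Jupyter magic; remove it when running as a plain script.)

    # Apply the style first so that it cannot override the rcParams set below.
    plt.style.use("ggplot")
    plt.rcParams['font.sans-serif'] = ['SimHei']  # a font with Chinese glyphs
    plt.rcParams['font.size'] = 18
    plt.rcParams['figure.figsize'] = (12, 8)
    new_data.set_index('投诉日期').plot(kind='bar')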

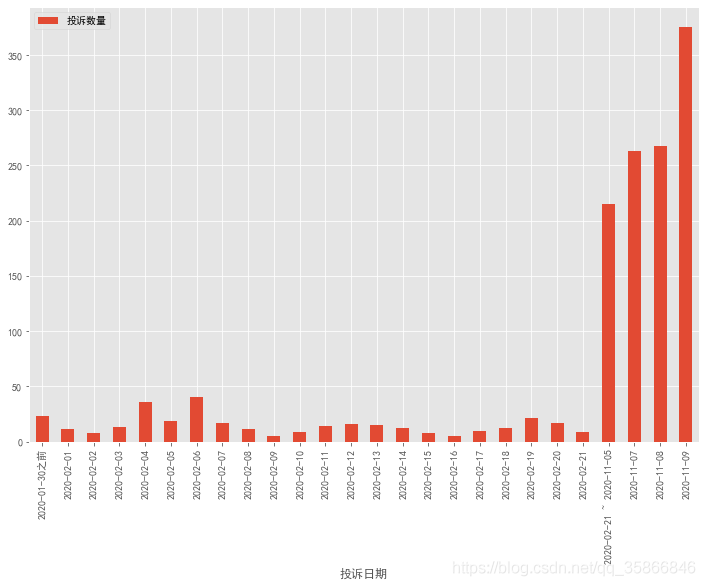

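Up to 2020-01-30 the complaint volume was normal, with only the occasional one or two. In February the pandemic drove a sharp increase. Likely causes include cleaning services that could not be provided during the outbreak, disputes over pandemic rent subsidies, and tenant anxiety stirred up by negative news about long-term rental operators collapsing and 某壳 going bankrupt.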

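From 2020-02-21 through 2020-11-05 the complaint volume was normal again: slightly higher than before 2020-01-30, but still within an acceptable range for a business operating normally.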

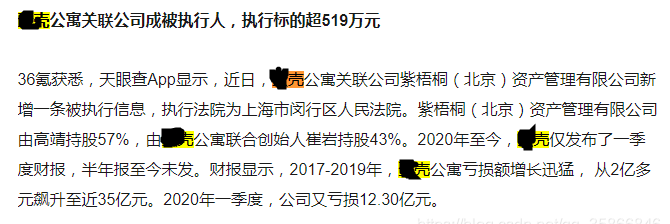

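On 2020-11-06 complaints suddenly jumped by more than 24,000. That outlier distorts the chart, so it is excluded from the plot. I checked the news to see whether anything major had happened that day, and something had: according to 36Kr (36氪), on 2020-11-06 a company affiliated with 某壳公寓 was listed as a judgment debtor (被执行人), with an enforcement amount of more than 5.19 million yuan.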
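The 2020-11-06 spike was spotted by eye. Below is a rough sketch of flagging such days automatically, assuming a simple rule of my own choosing: a day counts as an outlier when its count exceeds `factor` times the median daily count. The counts are invented, not the article's data, and `flag_outliers` is a hypothetical helper.

```python
import pandas as pd

# Invented daily counts with one extreme day.
daily = pd.Series(
    [3, 5, 4, 24000, 250, 6],
    index=["2020-11-03", "2020-11-04", "2020-11-05",
           "2020-11-06", "2020-11-07", "2020-11-08"],
    name="投诉数量",
)


def flag_outliers(counts, factor=100):
    """Return the entries whose value exceeds factor times the median."""
    return counts[counts > factor * counts.median()]


outliers = flag_outliers(daily)
print(outliers)
```

The median is used rather than the mean because a single extreme day barely moves it; the right `factor` depends on the data and would need tuning.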

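On the following days, the 7th, 8th, and 9th, new complaints about 某壳 on 黑猫 held at 200 to 300 per day. The official denial of 某壳's bankruptcy looks like nonsense. Perhaps it was never a rumor, and perhaps the online reports that 某壳 was repeating ofo's queues of people demanding their money back were not groundless.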

And this is only the complaint data available on 黑猫. How many more users had nowhere to complain, or simply wrote off their losses?

Next, let's look at what users mainly complain about and what they mainly demand.

    import jieba
    from wordcloud import WordCloud

    # Concatenate the complaint details (详细说明, column 4) into one string,
    # skipping missing values.
    all_word = ''.join(data['详细说明'].dropna().astype(str))

    # Segment the text into words with jieba.
    result = list(jieba.cut(all_word))

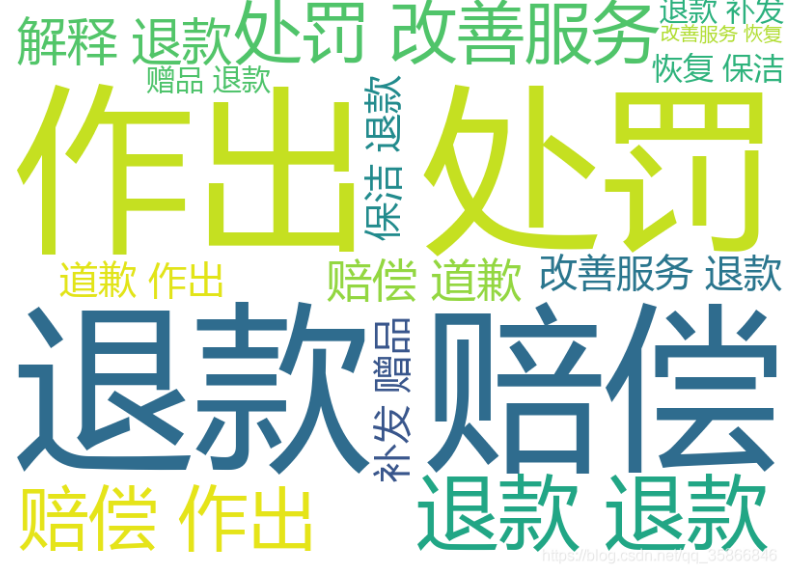

    wordcloud = WordCloud(
        width=800, height=600, background_color='white',
        font_path='C:\\Windows\\Fonts\\msyh.ttc',  # Chinese-capable font; Windows-specific path
        max_font_size=500, min_font_size=20,
    ).generate(' '.join(result))
    image = wordcloud.to_image()
    wordcloud.to_file('某壳公寓投诉详情.png')

    # Same steps for the complaint issues (投诉问题, column 2).
    all_word = ''.join(data['投诉问题'].dropna().astype(str))

    result = list(jieba.cut(all_word))

    wordcloud = WordCloud(
        width=800, height=600, background_color='white',
        font_path='C:\\Windows\\Fonts\\msyh.ttc',  # Chinese-capable font; Windows-specific path
        max_font_size=500, min_font_size=20,
    ).generate(' '.join(result))
    image = wordcloud.to_image()
    wordcloud.to_file('某壳公寓投诉问题.png')

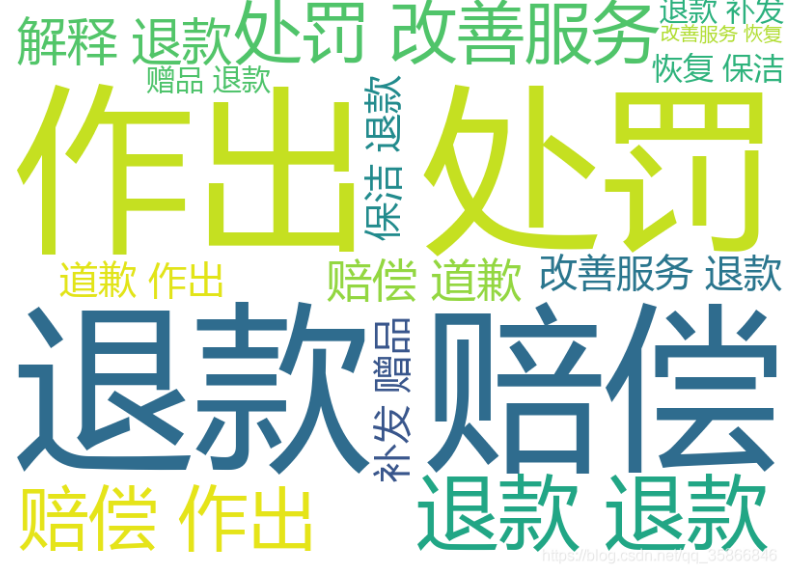

    # Same steps for the complaint demands (投诉诉求, column 3).
    all_word = ''.join(data['投诉诉求'].dropna().astype(str))

    result = list(jieba.cut(all_word))

    wordcloud = WordCloud(
        width=800, height=600, background_color='white',
        font_path='C:\\Windows\\Fonts\\msyh.ttc',  # Chinese-capable font; Windows-specific path
        max_font_size=500, min_font_size=20,
    ).generate(' '.join(result))
    image = wordcloud.to_image()
    wordcloud.to_file('某壳公寓投诉诉求.png')

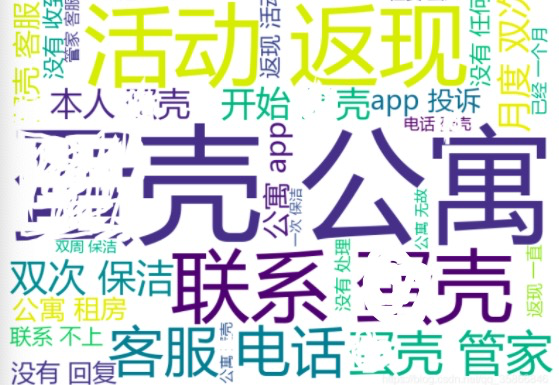

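The complaint details show the main issues: withdrawals and promotional cashback (a set amount was supposed to be returned each month; in my case the first two months were paid normally, later payments did not arrive on time, and once customer service stopped answering I more or less gave up following it), along with unreachable customer service and cleaning problems. Had the company faced these problems head-on, there might not have been so many complaints.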
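A word cloud gives an impression but no numbers. Here is a minimal sketch of ranking terms by frequency with `collections.Counter`, assuming the input is a list of tokens such as the one produced by `jieba.cut`. The `top_terms` helper, the minimum length of two characters, and the sample tokens are illustrative assumptions.

```python
from collections import Counter


def top_terms(tokens, n=3, min_len=2):
    """Count tokens of at least min_len characters and return the n most common."""
    counts = Counter(t for t in tokens if len(t.strip()) >= min_len)
    return counts.most_common(n)


# Invented tokens standing in for the output of jieba.cut.
tokens = ["提现", "客服", "的", "提现", "返现", "客服", "提现", ",", "保洁"]
print(top_terms(tokens))
```

The length filter is a crude substitute for a stopword list: it drops single-character particles and punctuation, but a proper stopword list would be needed for longer filler words.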

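As for what they want, complaining users overwhelmingly demand that 某壳公寓 be penalized accordingly and that they receive refunds and compensation.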

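Original link: https://blog.csdn.net/qq_35866846/article/details/109601322

Reposted by: Python之禅

(Copyright belongs to the original author; this repost will be removed on request in case of infringement.)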
